This here will work for the created workers when the scheduler. And the workers need to clone the DAG code also. Users can install only the dependencies they need for their workflows. Because the git sync container in the deployment works just for main airflow. Modular: Cosmos is designed to be modular. Users can add their own parsers and operators to support their own workflows. This allows users to update their workflows without having to restart Airflow.įlexible: Cosmos is not opinionated in that it does not enforce a specific rendering method for third-party systems users can decide whether they’d like to render their workflow as a DAG, TaskGroup, or individual task.Įxtensible: Cosmos is designed to be extensible. Seamless integration into data and developer tools like dbt, Airflow, Dagster and Prefect, as well as an intuitive UI for analysts to get started without. When workflows are defined as code, they become. They are responsible for executing the tasks in the workflow.Ĭosmos operates on a few guiding principles:ĭynamic: Cosmos generates DAGs dynamically, meaning that the dependency graph of the workflow is generated at runtime. Apache Airflow (or simply Airflow) is a platform to programmatically author, schedule, and monitor workflows. Operators: These represent the “user interface” of Cosmos – lightweight classes the user can import and implement in their DAG to define their target behavior. These are executed whenever the Airflow Scheduler heartbeats, allowing us to dynamically render the dependency graph of the workflow. Parsers: These are mostly hidden from the end user and are responsible for extracting the workflow from the provider and converting it into Task and Group objects. The values.yaml from the official Airflow Helm repository ( helm repo add apache-airflow needs the following values updated under gitSync: enabled: true repo: ssh:///username/repository-name.

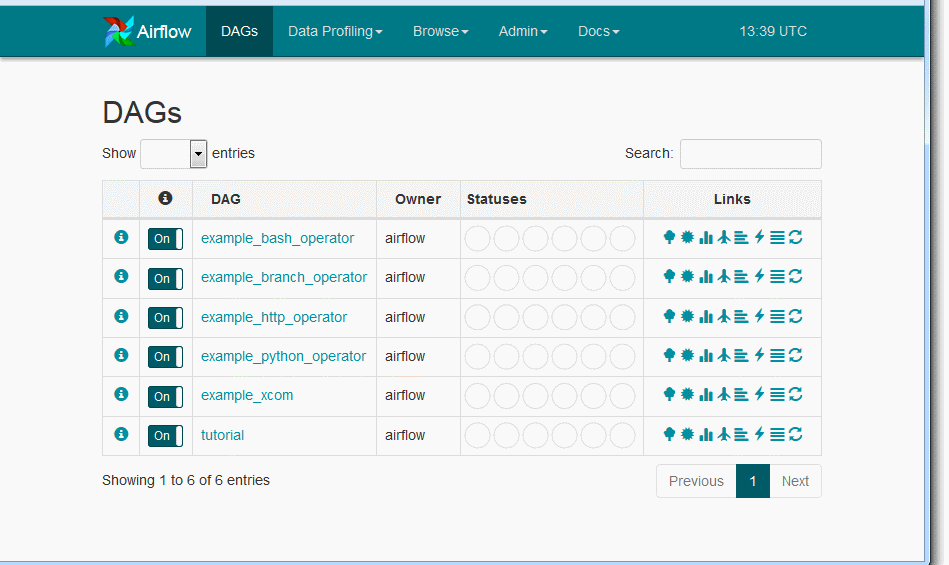

Here’s what the DAG looks like in the Airflow UI:ĭbt’s default jaffle_shop project rendered as a TaskGroup in Airflow # Principles #Īstronomer Cosmos is a package to parse and render third-party workflows as Airflow DAGs, Airflow TaskGroups, or individual tasks.Ĭosmos contains providers for third-party tools, and each provider can be deconstructed into the following components: The DbtTaskGroup operator will automatically generate a TaskGroup with the tasks defined in your dbt project. From pendulum import datetime from airflow import DAG from import EmptyOperator from _group import DbtTaskGroup with DAG ( dag_id = "extract_dag", start_date = datetime ( 2022, 11, 27 ), schedule =, ) as dag : e1 = EmptyOperator ( task_id = "ingestion_workflow" ) dbt_tg = DbtTaskGroup ( group_id = "dbt_tg", dbt_project_name = "jaffle_shop", conn_id = "airflow_db", dbt_args =, ) e2 = EmptyOperator ( task_id = "some_extraction" ) e1 > dbt_tg > e2
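To make the parser/operator split concrete, here is a minimal, framework-free sketch. Everything in it is hypothetical: `Task`, `Group`, `parse_manifest` and `run_group` are illustrative names, not the Cosmos API, and the input is assumed to be a plain mapping from each node to its upstream nodes (roughly the shape of a dbt project's dependency graph). The "parser" turns that mapping into Task and Group objects in dependency order; the "operator" side then executes the tasks in that order.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter
from typing import Any, Callable


@dataclass
class Task:
    """Hypothetical stand-in for a parsed task (not Cosmos's Task class)."""

    task_id: str
    upstream: list[str] = field(default_factory=list)


@dataclass
class Group:
    """Hypothetical stand-in for a parsed group of tasks."""

    group_id: str
    tasks: list[Task] = field(default_factory=list)


def parse_manifest(group_id: str, graph: dict[str, list[str]]) -> Group:
    """Parser side: turn {node: [upstream nodes]} into a Group of Tasks.

    Tasks come out in dependency order; a cyclic graph raises
    graphlib.CycleError.
    """
    order = TopologicalSorter(graph).static_order()
    tasks = [Task(node, list(graph.get(node, []))) for node in order]
    return Group(group_id, tasks)


def run_group(group: Group, execute: Callable[[str], Any]) -> list[Any]:
    """Operator side: execute each task in the order the parser produced."""
    return [execute(task.task_id) for task in group.tasks]


# Usage with a hypothetical three-model, dbt-style graph.
graph = {
    "stg_customers": [],
    "stg_orders": [],
    "customers": ["stg_customers", "stg_orders"],
}
group = parse_manifest("dbt_tg", graph)
executed = run_group(group, lambda task_id: f"ran {task_id}")
```

Because parsing in this sketch is just a function call over the current graph, re-running it (for example on every scheduler heartbeat) picks up changes to the workflow without restarting anything, which is the idea behind the Dynamic principle.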

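Airflow declares task dependencies with Python's right-shift operator `>>`, as in the last line of the example DAG. The toy model below is an illustration only, not Airflow's `BaseOperator` or `TaskGroup` code. It shows why such a chain works: `a >> b >> c` is evaluated left to right as `(a >> b) >> c`, so in this model each `>>` records an edge and returns its right-hand operand. A plain `>` is Python's comparison operator, not a dependency declaration.

```python
from __future__ import annotations


class ToyNode:
    """Toy model of Airflow-style `>>` chaining (not Airflow's own code)."""

    def __init__(self, node_id: str) -> None:
        self.node_id = node_id
        self.downstream: list[ToyNode] = []

    def __rshift__(self, other: ToyNode) -> ToyNode:
        # Record the edge self -> other, then hand back `other` so the next
        # `>>` in a chain starts from it: a >> b >> c == (a >> b) >> c.
        self.downstream.append(other)
        return other


e1 = ToyNode("ingestion_workflow")
dbt_tg = ToyNode("dbt_tg")
e2 = ToyNode("some_extraction")
e1 >> dbt_tg >> e2  # builds ingestion_workflow -> dbt_tg -> some_extraction
```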